原图

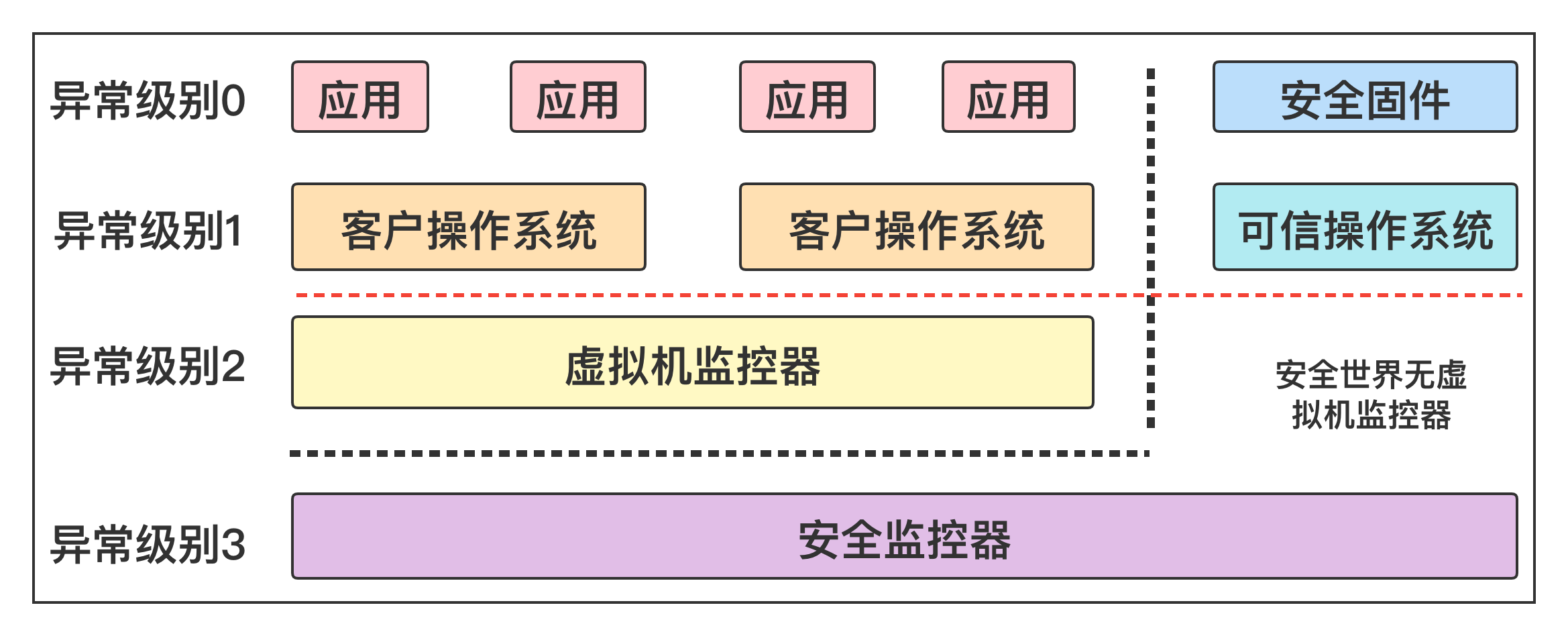

通常 ARM64 的进程执行在 EL0 级别,内核执行在 EL1 级别。

虚拟机是现在流行的虚拟化技术,在计算机上创建一个虚拟机,虚拟机里可以运行一个操作系统。常用的开源虚拟机管理软件是 QEMU,QEMU 支持基于内核的虚拟机 KVM,KVM 的特点是直接在处理器上执行客户机的操作系统,所以虚拟机的执行速度很快。

ARM64 架构的安全扩展定义了两种安全状态,正常世界和安全世界,两个世界只能通过异常级别 3 的安全监视器切换。

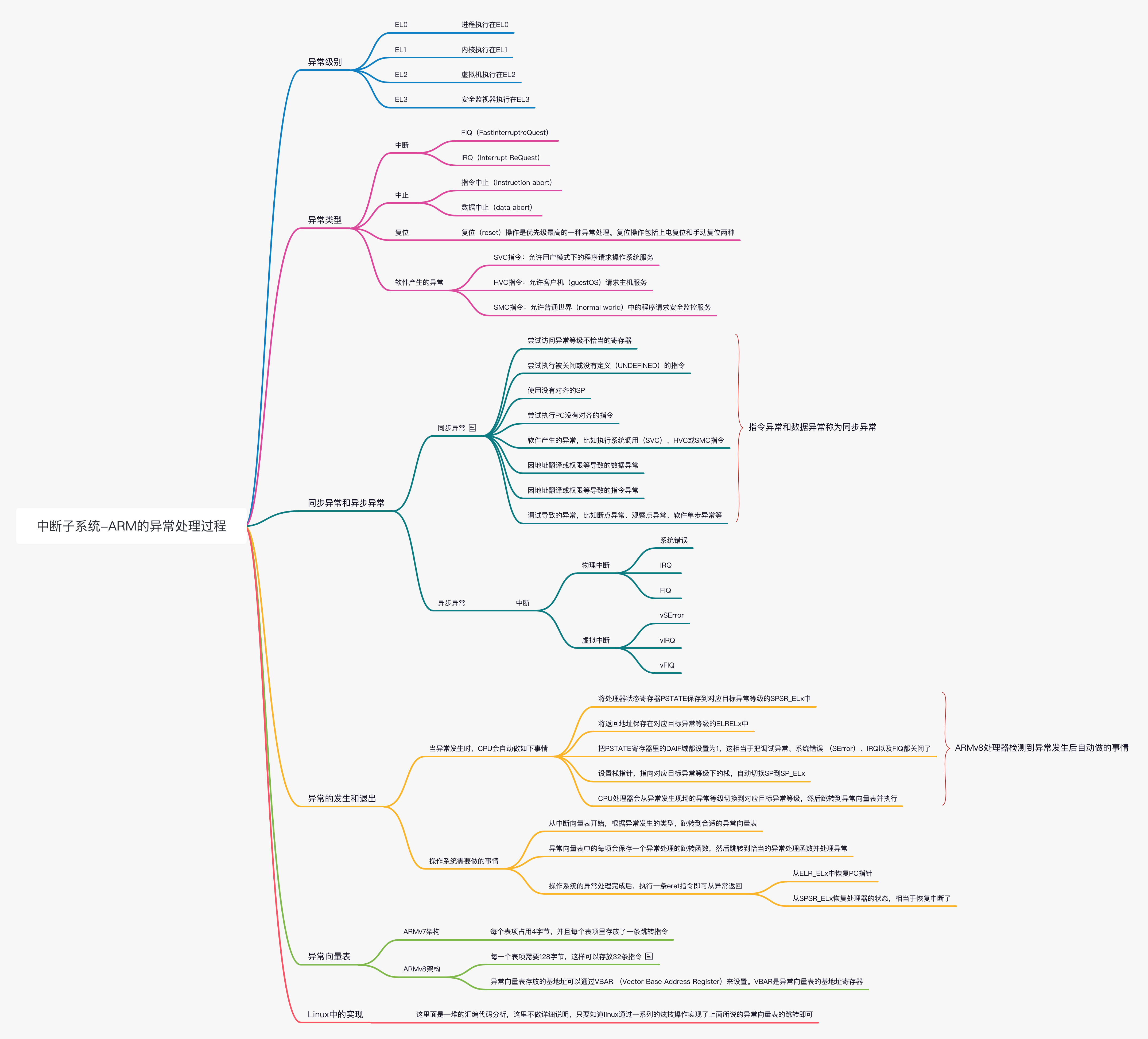

在 ARM 处理器中,FIQ(Fast Interruptre Quest)的优先级要高于 IRQ(Interrupt ReQuest)。在芯片内部,分别由 IRQ 和 FIQ 两根中断线连接到中断控制器再连接到处理器内部,发送中断信号给处理器。

中止(abort)主要有指令中止(instruction abort)和数据中止(data abort)两种,通常是因为访问外部存储单元时发生了错误,处理器内部的 MMU(Memory Management Unit)能捕获这些错误并且报告给处理器。指令中止是指当处理器尝试执行某条指令时发生了错误,而数据中止是指使用加载或存储指令读写外部存储单元时发生了错误。

复位(reset)操作是优先级最高的一种异常处理,复位操作包括上电复位和手动复位两种。

ARMv8 加构提供了 3 种软件产生的异常。发生这些异常通常是因为软件想尝试进入高的异常等级。

SVC 指令:允许用户模式下的程序请求操作系统服务

HVC 指令:允许客户机(guestOS)请求主机服务

SMC 指令:允许普通世界(normal world)中的程序请求安全监控服务

ARMv8 架构把异常分成同步异常和异步异常两种。同步异常是指处理器需要等待异常处理的结果,然后再继续执行后面的指令,比如数据中止发生时我们知道发生数据异常的地址,因而可以在异常处理函数中修复这个地址。常见的同步异常如下。

尝试访问异常等级不恰当的寄存器

尝试执行没有定义(UNDEFINED)的指令

使用没有对齐的 SP 或执行没有对齐的 PC 指令

软件产生的异常,比如执行系统调用(SVC)、HVC 或 SMC 指令

因地址翻译或权限等导致的数据异常或指令异常

调试导致的异常,比如断点异常、观察点异常、软件单步异常等

中断发生时,处理器正在处理的指令和中断是完全没有关系的,它们之间没有依赖关系因此,指令异常和数据异常称为同步异常,而中断称为异步异常。常见的异步异常包括物理中断和虚拟中断。

物理中断分为系统错误、IRQ、FIQ。

虚拟中断分为 vSError、 vIRQ、vFIQ。

当异常发生时,CPU 会自动做如下一些事情。

将处理器状态寄存器 PSTATE 保存到对应目标异常等级的 SPSR_ELx 中

将返回地址保存在对应目标异常等级的 ELRELx 中

把 PSTATE 寄存器里的 DAIF 域都设置为 1,这相当于把调试异常、系统错误 (SError)、IRQ 以及 FIQ 都关闭了。具体异常原因需要查看 ESR_ELx

设置栈指针,指向对应目标异常等级下的栈,自动切换 SP 到 SP_ELx

CPU 处理器会从异常发生现场的异常等级切换到对应目标异常等级,然后跳转到异常向量表并执行

上述是 ARMv8 处理器检测到异常发生后自动做的事情。操作系统需要做的事情是从中断向量表开始,根据异常发生的类型,跳转到合适的异常向量表。异常向量表中的每项会保存一个异常处理的跳转函数,然后跳转到恰当的异常处理函数并处理异常。

当操作系统的异常处理完成后,执行一条 eret 指令即可从异常返回。这条指令会自动完成如下工作

从 ELR_ELx 中恢复 PC 指针

从 SPSR_ELx 恢复处理器的状态

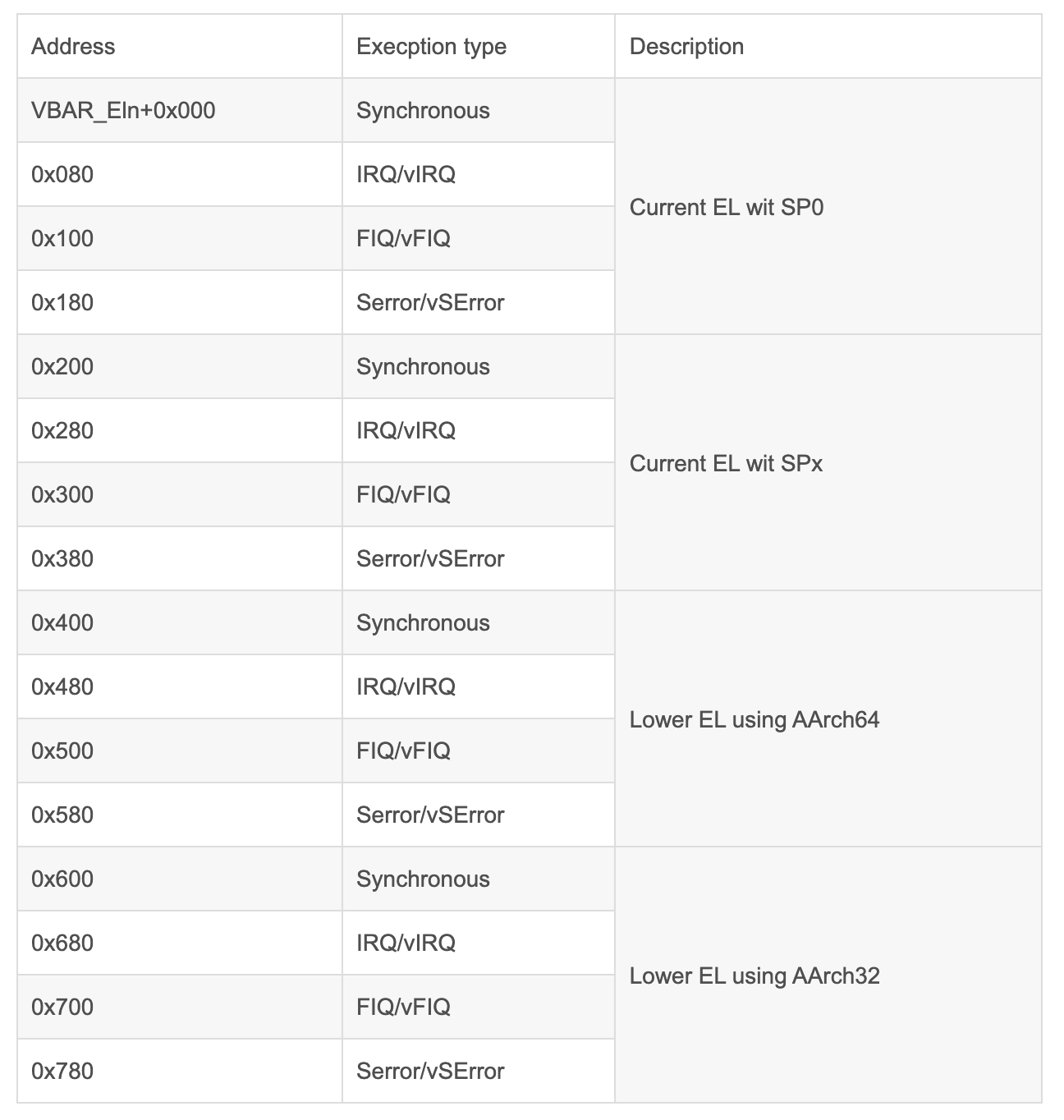

ARMv7 架构的异常向量表比较简单,每个表项占用 4 字节,并且每个表项里存放了一条跳转指令。但是 ARMv8 架构的异常向量表发生了变化。每一个表项需要 128 字节,这样可以存放 32 条指令。注意,ARMv8 指令集支持 64 位指令集,但是每一条指令的位宽是 32 位宽而不是 64 位宽。

在下表中,异常向量表存放的基地址可以通过 VBAR (Vector Base Address Register)来设置。VBAR 是异常向量表的基地址寄存器。见《ARM Architecture Reference Manual,ARMv8,for ARMv8-A architecture profile v8.4》的 D.1.10 节。

要理解下面这段代码需要先简单了解一下一些汇编语法,下面做简单介绍:

1 2 3 4 5 6 .macro sum from=0, to=5 .long \from .if \to-\from sum "(\from+1)",\to .endif .endm

根据该定义,“SUM 0,5”等效于以下程序集输入:

1 2 3 4 5 6 .long 0 .long 1 .long 2 .long 3 .long 4 .long 5

.macro comm 开始定义一个名为 comm 的宏,它不带参数。

1 2 .macro plus1 p, p1 .macro plus1 p p1

任一语句都开始定义一个名为 plus1 的宏,它带有两个参数;在宏定义中,写“\p”或“\p1”来计算参数。

1 .macro reserve_str p1=0 p2

以两个参数开始一个名为 reserve_str 的宏的定义。 第一个参数有一个默认值,但第二个没有。 定义完成后,您可以将宏调用为 ‘reserve_str a,b’(‘\p1’ 计算为 a,‘\p2’ 计算为 b),或为 ‘reserve_str ,b’(使用 ‘\p1 ’ 计算为默认值,在本例中为 ‘0’,’\p2’ 计算为 b)。

1 .macro m p1:req, p2=0, p3:vararg

以至少三个参数开始一个名为 m 的宏的定义。 第一个参数必须始终指定一个值,但第二个参数不是,而是具有默认值。 第三个形式将被分配在调用时指定的所有剩余参数。

调用宏时,可以按位置或关键字指定参数值。 例如,“sum 9,17”等价于“sum to=17, from=9”。

请注意,由于每个 macargs 都可以是与目标架构允许的任何其他标识符完全相同的标识符,因此如果目标在某些字符出现在特殊位置时对其制作了特殊含义,则可能会偶尔出现问题。 例如,如果冒号 (😃 通常被允许作为符号名称的一部分,但架构特定代码在作为符号的最后一个字符(以表示标签)出现时将其特殊化,则宏参数替换 代码将无法知道这一点并将整个构造(包括冒号)视为标识符,并仅检查此标识符是否受参数替换。 例如这个宏定义:

1 2 3 4 .macro label l \l: .endm

可能无法按预期工作。 调用“label foo”可能不会创建一个名为“foo”的标签,而只是将文本“\l:”插入到汇编源代码中,可能会产生关于无法识别的标识符的错误。

类似的问题可能会出现在操作码名称(以及标识符名称)中通常允许使用的句点字符 (‘.’) 中。 因此,例如构造一个宏以根据基本名称和长度说明符构建操作码,如下所示:

1 2 3 .macro opcode base length \base.\length .endm

并将其作为“opcode store l”调用不会创建“store.l”指令,而是在汇编器尝试解释文本“\base.\length”时产生某种错误。

如果可以使用空格字符,那么这是最简单的解决方案。例如:

1 2 3 .macro label l \l : .endm

字符串“()”可用于将宏参数的结尾与以下文本分开。例如:

1 2 3 .macro opcode base length \base\().\length .endm

Use the alternate macro syntax mode

在替代宏语法模式中,与号字符 (‘&’) 可用作分隔符。例如:

1 2 3 4 .altmacro .macro label l l&: .endm

正确识别伪操作的字符串参数的这个问题也适用于 .irp 和 .irpc 中使用的标识符。

.endm 标记宏定义的结尾。

下面看下 Linux 中 arm64 的异常向量表,在 arch/arm64/kernel/entry.S 文件中有:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 /* * Exception vectors. */ .pushsection ".entry.text", "ax" .align 11 SYM_CODE_START(vectors) # 使用 SP0 寄存器的当前的异常类型的异常向量表 kernel_ventry 1, t, 64, sync // Synchronous EL1t kernel_ventry 1, t, 64, irq // IRQ EL1t kernel_ventry 1, t, 64, fiq // FIQ EL1h kernel_ventry 1, t, 64, error // Error EL1t # 使用 SPx 寄存器的当前异常类型的异常向量表 kernel_ventry 1, h, 64, sync // Synchronous EL1h kernel_ventry 1, h, 64, irq // IRQ EL1h kernel_ventry 1, h, 64, fiq // FIQ EL1h kernel_ventry 1, h, 64, error // Error EL1h # AArch64 下低异常等级的异常向量表 kernel_ventry 0, t, 64, sync // Synchronous 64-bit EL0 kernel_ventry 0, t, 64, irq // IRQ 64-bit EL0 kernel_ventry 0, t, 64, fiq // FIQ 64-bit EL0 kernel_ventry 0, t, 64, error // Error 64-bit EL0 # AArch32 下低异常等级的异常向量表 kernel_ventry 0, t, 32, sync // Synchronous 32-bit EL0 kernel_ventry 0, t, 32, irq // IRQ 32-bit EL0 kernel_ventry 0, t, 32, fiq // FIQ 32-bit EL0 kernel_ventry 0, t, 32, error // Error 32-bit EL0 SYM_CODE_END(vectors)

上述异常向量表的定义和前面那张图是一致的,其中 kernel_ventry 是一个宏,他的实现如下:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 .macro kernel_ventry, el:req, ht:req, regsize:req, label:req .align 7 #ifdef CONFIG_UNMAP_KERNEL_AT_EL0 .if \el == 0 alternative_if ARM64_UNMAP_KERNEL_AT_EL0 .if \regsize == 64 mrs x30, tpidrro_el0 msr tpidrro_el0, xzr .else mov x30, xzr .endif alternative_else_nop_endif .endif #endif sub sp, sp, #PT_REGS_SIZE #ifdef CONFIG_VMAP_STACK /* * Test whether the SP has overflowed, without corrupting a GPR. * Task and IRQ stacks are aligned so that SP & (1 << THREAD_SHIFT) * should always be zero. */ add sp, sp, x0 // sp' = sp + x0 sub x0, sp, x0 // x0' = sp' - x0 = (sp + x0) - x0 = sp tbnz x0, #THREAD_SHIFT, 0f sub x0, sp, x0 // x0'' = sp' - x0' = (sp + x0) - sp = x0 sub sp, sp, x0 // sp'' = sp' - x0 = (sp + x0) - x0 = sp b el\el\ht\()_\regsize\()_\label 0: /* * Either we've just detected an overflow, or we've taken an exception * while on the overflow stack. Either way, we won't return to * userspace, and can clobber EL0 registers to free up GPRs. */ /* Stash the original SP (minus PT_REGS_SIZE) in tpidr_el0. */ msr tpidr_el0, x0 /* Recover the original x0 value and stash it in tpidrro_el0 */ sub x0, sp, x0 msr tpidrro_el0, x0 /* Switch to the overflow stack */ adr_this_cpu sp, overflow_stack + OVERFLOW_STACK_SIZE, x0 /* * Check whether we were already on the overflow stack. This may happen * after panic() re-enables interrupts. */ mrs x0, tpidr_el0 // sp of interrupted context sub x0, sp, x0 // delta with top of overflow stack tst x0, #~(OVERFLOW_STACK_SIZE - 1) // within range? b.ne __bad_stack // no? -> bad stack pointer /* We were already on the overflow stack. Restore sp/x0 and carry on. */ sub sp, sp, x0 mrs x0, tpidrro_el0 #endif b el\el\ht\()_\regsize\()_\label .endm

.macro kernel_ventry, el:req, ht:req, regsize:req, label:req 定义 kernel_ventry 宏有 4 个参数,其中:req 代表这个参数必须提供。撇开各种宏定义我们看下里面其实主要就是如下三行代码,我们一一分析:

表示把一条指令的地址对其到 2 的 7 次方,即 128 字节。

sub sp, sp, #PT_REGS_SIZE

sp 指针减去 PT_REGS_SIZE,PT_REGS_SIZE 的定义在 arch/arm64/kernel/asm_offset.c 文件中,如下:

1 DEFINE(PT_REGS_SIZE, sizeof (struct pt_regs));

pt_regs 定义如下:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 struct pt_regs { union { struct user_pt_regs user_regs ; struct { u64 regs[31 ]; u64 sp; u64 pc; u64 pstate; }; }; u64 orig_x0; #ifdef __AARCH64EB__ u32 unused2; s32 syscallno; #else s32 syscallno; u32 unused2; #endif u64 sdei_ttbr1; u64 pmr_save; u64 stackframe[2 ]; u64 lockdep_hardirqs; u64 exit_rcu; };

该结构定义了异常期间寄存器在堆栈上的存储方式。 请注意 sizeof(struct pt_regs) 必须是 16 的倍数(用于堆栈对齐)。

b el\el\ht()\regsize() \label

以 kernel_ventry 1, t, 64, sync 为例将宏展开得到:el1t_64_sync, 下面我们去看看该标号的实现,如下:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 .macro entry_handler el:req, ht:req, regsize:req, label:req SYM_CODE_START_LOCAL(el\el\ht\()_\regsize\()_\label) kernel_entry \el, \regsize mov x0, sp bl el\el\ht\()_\regsize\()_\label\()_handler .if \el == 0 b ret_to_user .else b ret_to_kernel .endif SYM_CODE_END(el\el\ht\()_\regsize\()_\label) .endm /* * Early exception handlers */ entry_handler 1, t, 64, sync entry_handler 1, t, 64, irq entry_handler 1, t, 64, fiq entry_handler 1, t, 64, error entry_handler 1, h, 64, sync entry_handler 1, h, 64, irq entry_handler 1, h, 64, fiq entry_handler 1, h, 64, error entry_handler 0, t, 64, sync entry_handler 0, t, 64, irq entry_handler 0, t, 64, fiq entry_handler 0, t, 64, error entry_handler 0, t, 32, sync entry_handler 0, t, 32, irq entry_handler 0, t, 32, fiq entry_handler 0, t, 32, error

将 entry_handler 1, t, 64, sync 宏展开,得到如下:

1 2 3 4 5 6 7 8 9 el1t_64_sync: kernel_entry \el, \regsize mov x0, sp bl el\el\ht\()_\regsize\()_\label\()_handler .if \el == 0 b ret_to_user .else b ret_to_kernel .endif

kernel_entry 宏定义如下:

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 .macro kernel_entry, el, regsize = 64 .if \regsize == 32 mov w0, w0 // zero upper 32 bits of x0 .endif stp x0, x1, [sp, #16 * 0] stp x2, x3, [sp, #16 * 1] stp x4, x5, [sp, #16 * 2] stp x6, x7, [sp, #16 * 3] stp x8, x9, [sp, #16 * 4] stp x10, x11, [sp, #16 * 5] stp x12, x13, [sp, #16 * 6] stp x14, x15, [sp, #16 * 7] stp x16, x17, [sp, #16 * 8] stp x18, x19, [sp, #16 * 9] stp x20, x21, [sp, #16 * 10] stp x22, x23, [sp, #16 * 11] stp x24, x25, [sp, #16 * 12] stp x26, x27, [sp, #16 * 13] stp x28, x29, [sp, #16 * 14] .if \el == 0 clear_gp_regs mrs x21, sp_el0 ldr_this_cpu tsk, __entry_task, x20 msr sp_el0, tsk /* * Ensure MDSCR_EL1.SS is clear, since we can unmask debug exceptions * when scheduling. */ ldr x19, [tsk, #TSK_TI_FLAGS] disable_step_tsk x19, x20 /* Check for asynchronous tag check faults in user space */ check_mte_async_tcf x22, x23 apply_ssbd 1, x22, x23 #ifdef CONFIG_ARM64_PTR_AUTH alternative_if ARM64_HAS_ADDRESS_AUTH /* * Enable IA for in-kernel PAC if the task had it disabled. Although * this could be implemented with an unconditional MRS which would avoid * a load, this was measured to be slower on Cortex-A75 and Cortex-A76. * * Install the kernel IA key only if IA was enabled in the task. If IA * was disabled on kernel exit then we would have left the kernel IA * installed so there is no need to install it again. */ ldr x0, [tsk, THREAD_SCTLR_USER] tbz x0, SCTLR_ELx_ENIA_SHIFT, 1f __ptrauth_keys_install_kernel_nosync tsk, x20, x22, x23 b 2f 1: mrs x0, sctlr_el1 orr x0, x0, SCTLR_ELx_ENIA msr sctlr_el1, x0 2: isb alternative_else_nop_endif #endif mte_set_kernel_gcr x22, x23 scs_load tsk .else add x21, sp, #PT_REGS_SIZE get_current_task tsk .endif /* \el == 0 */ mrs x22, elr_el1 mrs x23, spsr_el1 stp lr, x21, [sp, #S_LR] /* * For exceptions from EL0, create a final frame record. * For exceptions from EL1, create a synthetic frame record so the * interrupted code shows up in the backtrace. */ .if \el == 0 stp xzr, xzr, [sp, #S_STACKFRAME] .else stp x29, x22, [sp, #S_STACKFRAME] .endif add x29, sp, #S_STACKFRAME #ifdef CONFIG_ARM64_SW_TTBR0_PAN alternative_if_not ARM64_HAS_PAN bl __swpan_entry_el\el alternative_else_nop_endif #endif stp x22, x23, [sp, #S_PC] /* Not in a syscall by default (el0_svc overwrites for real syscall) */ .if \el == 0 mov w21, #NO_SYSCALL str w21, [sp, #S_SYSCALLNO] .endif /* Save pmr */ alternative_if ARM64_HAS_IRQ_PRIO_MASKING mrs_s x20, SYS_ICC_PMR_EL1 str x20, [sp, #S_PMR_SAVE] mov x20, #GIC_PRIO_IRQON | GIC_PRIO_PSR_I_SET msr_s SYS_ICC_PMR_EL1, x20 alternative_else_nop_endif /* Re-enable tag checking (TCO set on exception entry) */ #ifdef CONFIG_ARM64_MTE alternative_if ARM64_MTE SET_PSTATE_TCO(0) alternative_else_nop_endif #endif /* * Registers that may be useful after this macro is invoked: * * x20 - ICC_PMR_EL1 * x21 - aborted SP * x22 - aborted PC * x23 - aborted PSTATE */ .endm

这里不做详细分析了,除了对一些寄存器做基础处理,保存基本和特殊寄存器值外最重要的就是 bl el\el\ht()\regsize() \label()_handler,同样以上面第一个宏定义为例,展开为 el1t_64_sync_handler,这些函数的定义和实现在 linux/arch/arm64/include/asm/exception.h 和 linux/arch/arm64/kernel/entry-common.c 中。

在上面的一系列函数中,其实就是一个中断向量表,及其里面的函数实现,当外部中断发生时,跳转到具体标处运行代码。

https://sourceware.org/binutils/docs/as/Macro.html#Macro https://sourceware.org/binutils/docs/as/

[[LinuxKernelInterruptSummery]]